The title of this post is the question behind a news article, “The Dunning-Kruger Effect is Probably Not Real,” by Jonathan Jarry, sent to me by Herman Carstens. Jarry’s article is interesting, but I don’t like its title because I don’t like the framing of this sort of effect as “real” or “not real.” I think that all these sorts of effects are real, but they vary: sometimes the effects are large, sometimes they’re small, sometimes they’re positive and sometimes negative. So the real question is not, “Are these effects real?”, but “What’s really going on?”

Jarry writes:

First described in a seminal 1999 paper by David Dunning and Justin Kruger, this effect has been the darling of journalists who want to explain why dumb people don’t know they’re dumb.

I [Jarry] was planning on writing a very short article about the Dunning-Kruger effect and it felt like shooting fish in a barrel. Here’s the effect, how it was discovered, what it means. End of story.

But as I double-checked the academic literature, doubt started to creep in. . . .

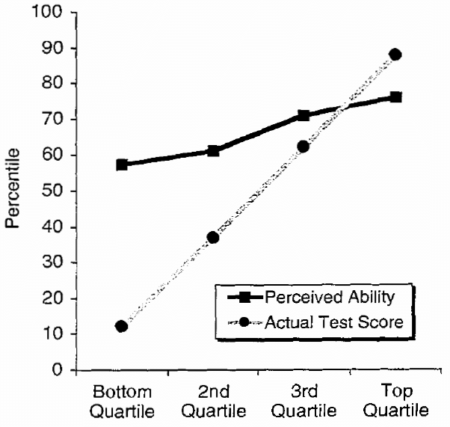

In a nutshell, the Dunning-Kruger effect was originally defined as a bias in our thinking. If I am terrible at English grammar and am told to answer a quiz testing my knowledge of English grammar, this bias in my thinking would lead me, according to the theory, to believe I would get a higher score than I actually would. And if I excel at English grammar, the effect dictates I would be likely to slightly underestimate how well I would do. I might predict I would get a 70% score while my actual score would be 90%. But if my actual score was 15% (because I’m terrible at grammar), I might think more highly of myself and predict a score of 60%. . . .

This is what student participants went through for Dunning and Kruger’s research project in the late 1990s. There were assessments of grammar, of humour, and of logical reasoning. Everyone was asked how well they thought they did and everyone was also graded objectively, and the two were compared. . . .

In the original experiment, students took a test and were asked to guess their score. Therefore, each student had two data points: the score they thought they got (self-assessment) and the score they actually got (performance). In order to visualize these results, Dunning and Kruger separated everybody into quartiles: those who performed in the bottom 25%, those who scored in the top 25%, and the two quartiles in the middle. For each quartile, the average performance score and the average self-assessed score was plotted. This resulted in the famous Dunning-Kruger graph.

Jarry continues:

In 2016 and 2017, two papers were published in a mathematics journal called Numeracy. In them, the authors argued that the Dunning-Kruger effect was a mirage. And I tend to agree.

The two papers, by Dr. Ed Nuhfer and colleagues, argued that the Dunning-Kruger effect could be replicated by using random data …

Hey, that sounds like regression to the mean: When evaluating a prediction, you should plot actual vs. predicted, not predicted vs. actual. We discuss this in Section 11.3 of Regression and Other Stories. If you plot predicted vs. actual (predicted on the y-axis, actual on the x-axis), you'll see a slope of less than 1, just from natural variation.
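To make the regression-to-the-mean point concrete, here is a minimal simulation sketch in Python. It is my own illustration, not the procedure used by Nuhfer and colleagues. I assume each person has a latent ability, and that both the test score and the self-assessment are unbiased but noisy measurements of it; the function name `simulate_quartiles` and the parameters `n`, `noise_sd`, and `seed` are made up for this example. Nobody in this simulated population is systematically overconfident, yet grouping by quartile of actual score still gives the familiar pattern.

```python
import numpy as np


def simulate_quartiles(n=10_000, noise_sd=1.0, seed=0):
    """Simulate unbiased but noisy scores and self-assessments (illustrative assumption)."""
    rng = np.random.default_rng(seed)
    ability = rng.normal(size=n)  # latent ability, never observed directly

    # Both measurements are the same ability plus independent noise: no bias anywhere.
    score = ability + rng.normal(scale=noise_sd, size=n)
    self_assessed = ability + rng.normal(scale=noise_sd, size=n)

    def pct_rank(x):
        # Percentile rank in (0, 100), using the midpoint of each rank.
        ranks = np.argsort(np.argsort(x))
        return 100.0 * (ranks + 0.5) / len(x)

    score_pct = pct_rank(score)
    self_pct = pct_rank(self_assessed)

    # Group people by quartile of ACTUAL performance, as in the famous graph.
    quartile = np.minimum((score_pct // 25).astype(int), 3)
    actual_means = np.array([score_pct[quartile == q].mean() for q in range(4)])
    self_means = np.array([self_pct[quartile == q].mean() for q in range(4)])

    # Slope from regressing self-assessed percentile on actual percentile.
    slope = np.polyfit(score_pct, self_pct, 1)[0]
    return actual_means, self_means, slope


if __name__ == "__main__":
    actual_means, self_means, slope = simulate_quartiles()
    for q in range(4):
        print(f"quartile {q + 1}: actual {actual_means[q]:5.1f}, "
              f"self-assessed {self_means[q]:5.1f}")
    print(f"slope of self-assessed on actual: {slope:.2f}")
```

In this sketch the bottom quartile's average self-assessed percentile comes out well above its average actual percentile, the top quartile's comes out below, and the fitted slope is between 0 and 1. One thing the simulation does not reproduce is any general tendency for everyone to rate themselves above average; that would require adding a shift to `self_assessed`, which is a separate assumption.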

Jarry also writes:

A similar simulation was done by Dr. Phillip Ackerman and colleagues three years after the original Dunning-Kruger paper, and the results were similar.

Wait a second! So this isn’t news at all! The Dunning-Kruger claim was shot down nearly 20 years ago. From the abstract of the 2002 article by Phillip Ackerman, Margaret Beier, and Kristy Bowen, published in the journal Personality and Individual Differences:

Recently, it has become popular to state that “people hold overly favorable views of their abilities in many social and intellectual domains” [Kruger, J., & Dunning, D. (1999)] . . . The current paper shows that research from the other side of the scientific divide, namely the correlational approach (which focuses on individual differences), provides a very different perspective for people’s views of their own intellectual abilities and knowledge. Previous research is reviewed, and an empirical study of 228 adults between 21 and 62 years of age is described where self-report assessments of abilities and knowledge are compared with objective measures. Correlations of self-rating and objective-score pairings show both substantial convergent and discriminant validity, indicating that individuals have both generally accurate and differentiated views of their relative standing on abilities and knowledge.

I did some searching on Google Scholar and found this article in the journal Political Psychology from 2018, “Partisanship, Political Knowledge, and the Dunning‐Kruger Effect,” by Ian Anson, reporting:

A widely cited finding in social psychology holds that individuals with low levels of competence will judge themselves to be higher achieving than they really are. . . . Survey experimental results confirm the Dunning‐Kruger effect in the realm of political knowledge. They also show that individuals with moderately low political expertise rate themselves as increasingly politically knowledgeable when partisan identities are made salient.

It’s helpful for me to see this in the context of political attitudes as this relates more to my own area of research.

A quick Google search also led to this article from 2020 by Gilles Gignac and Marcin Zajenkowski, “The Dunning-Kruger effect is (mostly) a statistical artefact: Valid approaches to testing the hypothesis with individual differences data,” which makes a point similar to a couple of other papers: Krueger and Mueller (Journal of Personality and Social Psychology, 2002) and Krajc and Ortmann (Journal of Economic Psychology, 2008).

Anyway, if all these analyses are correct, it’s interesting that people have been pointing out the problem for nearly twenty years in published papers in top journals, but the message still isn’t getting through.

One relevant point, I guess, is that even if the observed effect is entirely a product of regression to the mean, it’s still a meaningful thing to know that people with low abilities in these settings are overestimating their true abilities. That is, even if this is an unavoidable consequence of measurement error and population variation, it’s still happening. And, the “Dunning-Kruger effect” appears to have quite a following, at least based on the number of images available like the one below (with curve and witty commentaries).